Doubling Compute Capacity for Data-Intensive Physics with Microsoft Azure

Published December 15, 2021

Cynthia Dillon, SDSC Communications

The San Diego Supercomputer Center (SDSC) at UC San Diego recently teamed up with the Open Science Grid (OSG) to use Microsoft Azure Cloud Services to expedite a set of high-profile data analyses in particle physics. The effort more than doubled the compute capacity that UC San Diego provides to the Compact Muon Solenoid (CMS) collaboration.

The CMS experiment at CERN’s Large Hadron Collider (LHC) is one of the largest data producers in the scientific world. Its standard data products are centrally produced and used frequently by competing teams within the collaboration, which is made up of more than 200 institutions in 40 countries. The OSG is a federated infrastructure allowing many independent resource providers to serve various independent user communities.

With SDSC and OSG teaming up to use Azure, half a dozen CMS collaborators at UC San Diego, UC Santa Barbara, Boston University and Baylor University accomplished in a few days what would normally take them several weeks. This in turn motivated them to pursue additional studies that they would not otherwise have attempted, given the compute-intensive nature of the projects. Cloud integration both accelerated the science and allowed researchers to pursue science that otherwise would have been out of reach.

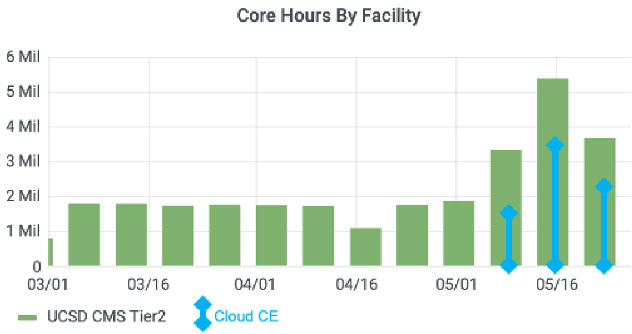

Screenshot of the weekly OSG accounting for UC San Diego-associated CMS resources. Figure courtesy of Igor Sfiligoi, SDSC

“Our collaborators already had access to many on-premises resource providers through OSG, so adding commercial clouds as resource providers was a natural evolution,” explained SDSC’s Igor Sfiligoi, first author of a preprint paper titled “Data intensive physics analysis in Azure cloud,” co-authored by Frank Würthwein and Diego Davila and recently presented at the Fourth International Conference on Computer Networks, Big Data and IoT (Internet of Things). “The OSG technology allows for easy integration of cloud compute resources, and dedicated data caching infrastructure ensures high efficiency for the data-intensive CMS compute jobs.”

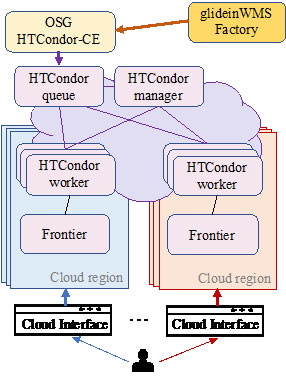

According to Würthwein, OSG provides the necessary trust and technical mechanisms – the glue – that allows seamless integration of the many resource providers and user communities without combinatorial issues. He explained that in OSG all resource provisioning details are abstracted behind a portal – the Compute Entrypoint (CE).

“The portal implementation used is HTCondor-CE and relies on a batch system paradigm, translating global requests into local ones. After successful authentication, the presented credential is mapped to a local system account and all further authorization and policy management is handled in the local account domain," said Würthwein. "Several backend batch systems are supported; we used HTCondor for this work, due to its proven scalability and extreme flexibility.”

Overview of a cloud-enabled OSG CE. Image courtesy of Igor Sfiligoi, SDSC

According to Sfiligoi, because most CMS analyses are data-intensive and cloud resources are remote, content delivery network services had to be deployed in Azure to minimize data-access-related inefficiencies. This was particularly urgent given the use of multiple cloud regions, spanning both U.S. and European locations. The caches performed very well, keeping the central processing unit (CPU) utilization on par with "on-prem" resources.

The peer-reviewed final version of the paper by Sfiligoi, Würthwein and Davila is expected to be published in the Springer Proceedings, Lecture Notes on Data Engineering and Communications Technologies, in an issue provisionally entitled “Computer Networks, Big Data and IoT,” sometime in 2022.

This work was supported in part by the National Science Foundation (grants OAC-2030508, MPS-1148698, OAC-1826967, OAC-1836650, OAC-1541349, PHY-1624356, and CNS-1925001) and credits provided by Microsoft.